It takes years to build a reputation

and five minutes to ruin it.

Green Across the Board

Sarah’s Monday morning dashboard showed green across the board, the second of November and the first cool morning of the season. Fourteen people on staff. Fifteen active client engagements. Revenue run rate approaching $3.0 million.

The AI pipeline was humming: contracts flowing in, analyses flowing out, quality scores holding steady above 96 percent. David Park, her COO, had built a tracking system that made the whole operation feel like a well-tuned engine.

She should have been suspicious of how smoothly things were going.

The email from Meridian Pharmaceuticals arrived at 9:14 a.m., and it was the kind of email that makes a founder’s pulse quicken. Meridian was a mid-market pharma company headquartered in Scottsdale: $800 million in revenue, 2,400 employees, a product portfolio spanning generic medications and two branded drugs in late-stage clinical trials. Their deputy general counsel, Tom Kaufman, had seen Sarah present at a legal technology conference in Tempe. He wanted to discuss a regulatory compliance review.

Sarah called David into her office. “Meridian Pharma. They want a full FDA regulatory compliance review across their product lines. This could be a six-figure engagement.”

David leaned against the doorframe. In the less than two years since Alex had connected them, he had transformed the firm’s operations. He had built the pod system that now ran four specialized teams, onboarded Anika Sharma and Nolan Webb through the twelve-week certification program, and created the workflow documentation that turned intuitions about quality control into repeatable processes.

“Pharma regulatory,” he said. “That is new territory for us.”

“We’ve done compliance work before. The FinServ engagement went well, and we’ve done regulatory work for hospitals as well.”

“But hospital and financial services compliance are not FDA compliance. FDA work is a different animal. Pharma is not just one set of rules applied to one company. Each manufacturing site has its own establishment registration, each product has its own approved scope, and the rules that apply to a specific document depend on which product is made where, under which registration, in which approved configuration. It is layered.”

“I know it’s layered. That’s why they’re coming to us instead of paying their current outside counsel three times as much to have associates read every page manually.”

David gave her a look she had come to recognize, the look that said I am not disagreeing with you, but I want you to hear what I am about to say.

“Let me scope it properly before we quote,” he said. “Give me two days.”

“We have a meeting with Tom Kaufman on Wednesday.”

“Then give me until Wednesday morning.”

Tom Kaufman was not what Sarah expected. She had pictured a corporate lawyer in his fifties, cautious, buttoned-up, the kind of deputy GC who had spent twenty years saying no to things. Instead, Tom was thirty-eight, with an engineering degree from Georgia Tech before his law degree from Emory. He had worked at the FDA for four years before going in-house. He understood both the science and the regulation, which made him the best and worst kind of client: best because he could appreciate what AI could do, worst because he would know immediately if it got something wrong.

They met in Meridian’s conference room: glass walls, a view of Camelback Mountain, the antiseptic tidiness of a pharma company that took regulatory appearances seriously. Tom had brought his senior regulatory affairs manager, a woman named Diane who took notes on a legal pad and said nothing for the first twenty minutes.

“I saw your presentation at the conference,” Tom said. “The demo was impressive. You showed how your AI pipeline could map regulatory requirements against documentation in minutes. That’s exactly what we need.”

“Walk me through the scope,” Sarah said.

“We have fourteen product lines manufactured across four sites: Scottsdale, Phoenix, Tempe, and a contract manufacturer in San Diego. Each site has its own establishment registration. Each product has its own approved manufacturing scope. We’ve moved three products between sites in the past two years through CBE-30 filings. We need a complete review to identify gaps before our next FDA inspection. The last inspection was eighteen months ago and we had two observations. Nothing critical, but leadership wants a clean sheet.”

“Timeline?”

“Eight weeks. We have the inspection window in Q1.”

Sarah glanced at David. He had his poker face on, which meant he was doing math in his head.

“What are you paying now for this kind of review?” Sarah asked.

Tom leaned back. “Marcus Chen’s group quoted $340,000 last time. Marcus has handled our regulatory work for years. Good lawyer, thorough. But it took twelve weeks. Junior associates buried in regulatory files, partners billing for supervision time, the usual grind.”

“We can do it for $175,000, fixed fee, in six weeks.”

Tom’s eyebrows rose. “That’s aggressive.”

“It’s our model. AI handles the document analysis, regulatory mapping, and gap identification. Our lawyers verify the output and provide the judgment layer. You get faster turnaround, lower cost, and a deliverable that’s structured for your team to act on.”

“Tell me about the verification process,” Tom said. He leaned forward, and Sarah noticed Diane had stopped writing. They were both listening carefully now.

Sarah walked them through the workflow: document ingestion, AI-powered regulatory mapping, automated matching against the Code of Federal Regulations, human review of flagged gaps, structured deliverable generation. She described the quality metrics, the error rates, the feedback loops that improved the system with each engagement.

Tom nodded slowly. “I’ll be honest with you. I’ve seen a lot of legal tech pitches. Most of them are vapor. Your demo was the first one where I thought the technology might actually work for regulatory compliance.” He paused. “But I need to understand something. Have you done FDA regulatory work before?”

“We’ve done extensive compliance work in financial services and healthcare operations,” Sarah said. “The regulatory mapping methodology is largely the same. We adapt the knowledge base for each regulatory domain.”

It was the right answer and also, Sarah would later realize, the wrong one. The methodology was the same in theory. In practice, FDA regulatory compliance had a specificity and an interconnectedness that her team had not fully appreciated.

Tom signed the engagement letter on Friday.

Eleven Errors: The Meridian Crisis

The first three weeks went well. Priya Chandrasekaran, Sarah’s CTO, had configured the AI pipeline for pharmaceutical regulatory analysis. Priya was thirty-five, a former software engineer at a legal technology company who had joined Sarah’s firm to build something from scratch rather than maintain someone else’s legacy code. She had assembled the technical architecture: the document ingestion system, the AI agent framework, the verification dashboard. She understood the pipeline’s strengths and limits better than anyone.

Priya’s team had loaded the relevant sections of the U.S. Code, the Code of Federal Regulations, and related regulatory materials into the knowledge base, ingested Meridian’s documentation set, and run test batches against sample regulatory files. The results looked clean. The AI was identifying gaps between Meridian’s documentation and regulatory requirements with what appeared to be high accuracy.

“Appeared to be” was doing heavy lifting in that sentence, though Sarah did not know it yet.

The problem was subtle. FDA compliance is not a uniform standard applied to a uniform company. Each manufacturing site operates under its own establishment registration, which defines the dosage forms and product classes that site is approved to produce. Each product has an approved manufacturing scope tied to specific facilities, specific processes, and specific equipment. When a company moves a product from one site to another, the regulatory scope follows the product, but only if the appropriate filings have been made and accepted. A requirement under 21 CFR Part 211 might apply at the Phoenix sterile-injectable line but be irrelevant at the Tempe oral-solid facility, because Tempe’s registration does not cover sterile manufacturing. Conversely, a requirement might apply at Tempe only because a product was transferred there six months earlier under a CBE-30 amendment.

Sarah’s AI pipeline was good at matching regulatory text against documentation. It was not built to model Meridian as four sites, four registrations, and a product portfolio that had shifted across those sites in the past two years. The pipeline treated the documentation set as a single corpus belonging to a single regulated entity. When a calibration protocol required under 21 CFR 211.68 was missing from a Tempe SOP, the AI flagged it, without checking whether the Tempe facility was even registered for the product class that triggered the requirement. When a sterile-process control was present at Phoenix and absent at Tempe, the AI flagged Tempe, missing that sterile manufacturing was not in Tempe’s scope, and that the real question was whether the recently transferred Product 7 had been properly documented under Phoenix’s registration after the move.

Candor OS saw documents and rules. It did not see which rules applied where.
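The missing check can be sketched in a few lines. What follows is an illustration under stated assumptions, not the firm's actual pipeline: the class names, the in_scope function, the product-class labels, and the choice to treat Product 7 as a sterile injectable are all hypothetical. The idea is simply that a finding should survive only if the site's registration covers the triggering product class and a product of that class is currently made there.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Site:
    name: str
    registered_classes: frozenset  # product classes the registration covers


@dataclass(frozen=True)
class Product:
    name: str
    product_class: str
    current_site: str  # site of manufacture after any accepted transfer


@dataclass(frozen=True)
class Finding:
    requirement: str
    triggering_class: str  # product class that triggers the requirement
    site: str


def in_scope(finding, sites, products):
    """Return True only if the requirement can apply at the flagged site."""
    site = sites[finding.site]
    # The registration must cover the product class that triggers the rule.
    if finding.triggering_class not in site.registered_classes:
        return False
    # A product of that class must currently be manufactured at the site.
    return any(
        p.current_site == site.name
        and p.product_class == finding.triggering_class
        for p in products
    )


# Toy data (assumed for illustration only).
sites = {
    "Tempe": Site("Tempe", frozenset({"oral_solid"})),
    "Phoenix": Site("Phoenix", frozenset({"sterile_injectable"})),
}
products = [Product("Product 7", "sterile_injectable", "Phoenix")]
# A stale master list that still places Product 7 in San Diego.
stale_products = [Product("Product 7", "sterile_injectable", "San Diego")]

tempe_finding = Finding("sterile-process control", "sterile_injectable", "Tempe")
phoenix_finding = Finding("sterile-process control", "sterile_injectable", "Phoenix")

tempe_ok = in_scope(tempe_finding, sites, products)               # false positive filtered
phoenix_ok = in_scope(phoenix_finding, sites, products)           # real requirement kept
phoenix_stale = in_scope(phoenix_finding, sites, stale_products)  # stale map hides it
```

With the toy data, the Tempe finding is filtered out, the Phoenix finding is kept, and the stale product list makes the Phoenix requirement disappear. That last case is the same kind of false negative the report produced for Product 7.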

Sarah’s QA process was designed for the kind of compliance work they had done before: verify each finding against the cited regulation and the cited document. Megan and Ryan handled the complex interpretive review, while Anika and Nolan verified individual gap identifications against the checklist protocols David had built. The team checked whether each flagged gap matched a real requirement and a real documentation absence. They did not have the domain expertise to ask the prior question: does this requirement apply to this site for this product under this registration?

The deliverable went to Tom Kaufman at the end of week five, a week ahead of schedule. Sarah was proud of the turnaround. The report was 180 pages, thorough, well-structured, professionally formatted. It identified 47 regulatory gaps across Meridian’s fourteen product lines, with severity ratings and remediation recommendations.

Tom’s response came three days later. Not by email. By phone.

“Sarah, we need to talk.”

His voice was calm, which was somehow worse than anger.

“Diane and I have been reviewing your report. The individual gap identifications, where they apply, are mostly accurate. But we’ve found problems with the scope of the analysis. Significant problems.”

Sarah’s stomach dropped. “Tell me.”

“Your report flags four findings against our Tempe facility related to sterile-process documentation. Tempe doesn’t manufacture sterile products. Tempe’s establishment registration covers oral solids only. Those four findings are noise, and noise like that in front of a reviewer makes them question every other finding in the report. Worse, your analysis cleared Phoenix on documentation requirements for Product 7. Product 7 was transferred from San Diego to Phoenix fourteen months ago. The transfer documentation is incomplete. Your report did not flag it because your pipeline treated Product 7 as a San Diego product based on the master product list it ingested. We found six other instances where your analysis applied a requirement to the wrong site, or missed a requirement because it had the wrong site-product mapping.”

Sarah was silent for a moment. “How many total findings are affected?”

“We’ve identified eleven scope errors so far. Diane is still reviewing. Some of them are minor, false positives we can document away. But three of them are material misses. The Phoenix Product 7 issue is the worst one. If we had relied on your report as delivered and walked into the inspection with that gap unaddressed, we’d be facing a Form 483 observation on transfer documentation, and the agency would be asking why we didn’t catch it.”

“Tom, I—”

“I’m not done.” His voice remained level. “I took a chance on you because your pitch was compelling. Your pricing was aggressive, your timeline was tight, and your technology was impressive. But I can’t take chances with FDA compliance. The consequences of getting this wrong aren’t a billing dispute or a client complaint. They’re warning letters, consent decrees, product recalls. My CEO doesn’t care how much money I saved if we get a Form 483 with findings we should have caught.”

“I understand.”

“Fix this or we’re done. And I need to understand what went wrong before I can trust any of the analysis that wasn’t flagged.”

Sarah hung up the phone and sat in her office for a long time. The dashboard on her screen still showed green across the board. She wanted to throw something at it.

David was in her office within ten minutes. Priya joined by video from her home office, where she had been debugging an unrelated pipeline issue.

“We have a problem,” Sarah said, and walked them through Tom’s call.

David’s response was immediate and operational. “How bad is it? Quantify.”

“Eleven scope errors identified so far. Three material. We don’t know the total yet because Tom’s team is still reviewing.”

“And the root cause?”

Sarah looked at Priya. “The pipeline doesn’t model the entity structure. It’s matching regulatory text against documentation as if Meridian were one company with one set of rules. Meridian is four sites, four registrations, fourteen products that move between sites. The pipeline doesn’t know which rules apply where. It’s been generating false positives at Tempe and false negatives at Phoenix because it never asked the prior question.”

Priya nodded slowly. “I configured the pipeline to retrieve regulations and match them against documentation. I didn’t build an entity-resolution layer. For FinServ, we didn’t need one, because the relevant rules applied uniformly across the institution. For pharma, the rules apply at the establishment-product-process level. I built compliance checking. I didn’t build scope reasoning. The pipeline was treating four sites and four registrations as one regulated entity.”

“And our QA process didn’t catch it,” David said. It was not a question.

“No. Our lawyers verified each individual finding against the cited regulation and the cited document. They didn’t ask whether the requirement applied to that site for that product under that registration. That’s the question that needs domain expertise. They were checking whether each gap was real. They weren’t checking whether each gap was in scope.”

David wrote three words on the whiteboard in Sarah’s office: Scope. Pipeline. QA.

“Three failures,” he said. He pointed at the 4S framework diagram that was still pinned above Sarah’s desk, the portfolio analysis tool he had introduced months earlier. “First, we scoped this as a Standardize engagement. High volume, defined process, AI-driven analysis with human verification. But pharmaceutical regulatory compliance is not Standardize work. The entity-scope complexity puts it squarely in Supplement territory. AI does the heavy lifting on document analysis, but mapping which rules apply to which sites, products, and registrations requires serious human expertise at every stage, not just at the end.”

Sarah felt the lesson land. They had been talking about the 4S framework (Standardize, Supplement, Specialize, Stop) for months. She had used it to design their service portfolio. And she had misclassified this engagement.

“Second,” David continued, “the pipeline was not configured for this type of regulatory structure. That is a technical problem with a technical fix, but it needs to happen before we deliver anything else.”

Priya was already taking notes. “I can build an entity-resolution layer that maps products to sites to registrations to applicable rule subsets. Two to three weeks. But I also need subject matter expertise to validate the entity graph: which products are actually made at which sites, which registrations cover which scopes, which transfers have been filed and accepted. I can build the plumbing, but I need someone who knows FDA establishment rules to tell me what the real entity structure looks like.”

“Third,” David said, “our QA process assumed that verifying individual findings was sufficient. For Standardize work, it is. For Supplement work, we need domain-expert review of the analytical framework, not just the individual outputs. We need a pharmaceutical regulatory specialist in the loop.”

Sarah stared at the whiteboard. Three failures. Each one traceable to a decision she had made. She had priced the engagement aggressively because she believed her pipeline could handle it. She had scoped it as Standardize because she wanted to prove that AI-native firms could take on complex work at scale. She had relied on generalist lawyers for QA because hiring a pharmaceutical regulatory specialist for a single engagement seemed inefficient.

Every one of those decisions had been rational in isolation. Together, they had produced a deliverable that could have damaged a client’s relationship with the FDA.

“Here’s what we do,” Sarah said. “I call Tom back today. I tell him exactly what went wrong, all three failures. Just like we have done when we have come up short in the past. No spin, no excuses. I tell him we’re redoing the analysis at no additional charge, and I tell him the timeline for the corrected deliverable. Then we fix the pipeline, fix the QA process, and deliver a report he can rely on.”

“And the cost?” David asked.

“We eat it. All of it. The rework, the specialist we need to hire on contract, the pipeline rebuild. Even if this engagement goes from profitable to a significant loss, I do not care. We cannot take the reputational hit.”

David nodded. “It is the right call. If we lose the client, we lose more than the engagement. We lose a reference in pharma, and we lose credibility in a market we are trying to enter.”

“We might lose the client anyway,” Sarah said.

“Maybe. But we won’t lose the client because we were dishonest about what happened.”

David paused at the door. “One more thing. Do we need to notify our malpractice carrier?”

Sarah had not thought about insurance. She should have. “Yes. I’ll call the broker this afternoon.”

The broker call was brief. No claim threatened, no client action taken on the flawed report, corrected deliverable would resolve the exposure. The broker asked her to notify in writing and document the error, root cause, remediation, and client communication. “Probably stays a notification rather than a claim,” she said. “But your next renewal is going to include questions about your AI quality assurance process that weren’t on last year’s application. Underwriters are still figuring out how to price this.”

Sarah thanked her and hung up. The insurance industry was asking the same question she was: when AI produces the first draft and humans verify it, and the humans miss what the AI got wrong, where does the liability sit? The answer, for now, was with the firm. The question itself signaled that the profession was entering territory where the old frameworks, built for humans making human errors, would need to evolve.

She made one more call before calling Tom. Alex picked up on the second ring.

“We have a problem with Meridian,” Sarah said, and gave him the summary: eleven errors, three material, full rework at no charge, estimated $80,000 loss on a $175,000 engagement.

Alex was quiet for a moment. “What’s the client’s temperature?”

“Shaken. Not hostile. I’m calling him back today with the full diagnosis and remediation plan. No spin.”

“Good. Don’t spin it.” Alex paused. “Sarah, I’ve seen portfolio companies handle this both ways. The ones that minimize and deflect lose the client and the lesson. The ones that own it completely sometimes lose the client anyway, but they keep the lesson, and the market notices how they handled it.”

“We’re going to lose money on this. And it isn’t just the engagement. The specialist contract, the holiday engineering sprint Priya is going to push for, the Q1 cash burn. We were already running tighter than the dashboard makes it look.”

“You’re going to lose money on this engagement. That’s different from losing money on this client.” His voice carried the measured certainty of someone who had watched enough companies stumble to know which stumbles were fatal and which were formative. “At your stage, you can survive one Meridian. You cannot survive two. Fix the work. Fix the process. And make sure the next engagement in a new domain doesn’t repeat the pattern.” Alex paused. “One more thing. I’m bringing Margaret Adeyemi onto the board. Former GC at a Fortune 500 healthcare conglomerate, three acquisitions and a divestiture under her belt. She’ll formally join in Q2 and her first board call is going to be the corrected Meridian report. She is going to ask hard questions. That is what we want.”

Tom Kaufman listened to Sarah’s explanation without interruption. She walked him through all three failures: the scoping error, the pipeline limitation, the QA gap. She did not minimize or deflect.

When she finished, there was a long pause.

“I appreciate the honesty,” Tom said. “Most firms would have sent me a revised report with a cover letter saying they’d made minor corrections. You’re telling me the entire analytical framework had a structural flaw.”

“It did. And you deserve to know that, not a sanitized version of it.” Sarah paused. “We called this firm Candor. It would be a pretty short-lived name if we weren’t willing to live up to it.”

Tom almost laughed. “Fair enough. What’s your plan?”

“We’re building an entity-resolution layer so the pipeline maps every requirement to the right site, product, and registration before it generates a finding. We’re bringing in Catherine Alvarez, a former FDA reviewer now consulting independently, to validate your establishment-product graph and review the corrected analysis. And we’re redesigning our QA process so that engagements with this level of regulatory complexity get domain-expert review at the framework level, not just the finding level.”

“Timeline?”

“Three weeks for the corrected deliverable. Still within your Q1 inspection window.”

“Cost?”

“No additional charge. We quoted a fixed fee and we’re going to deliver what we promised. If anything, we owe you a credit for the time your team spent identifying our errors.”

Another pause. “I’m not going to pretend this doesn’t shake my confidence. It does. But I’ve dealt with traditional firms that billed me $340,000 and missed things too. They just didn’t have a deputy GC who caught the mistakes.” Tom’s voice carried a wry note. “The difference is that when a traditional firm misses something, nobody talks about it. When an AI-native firm misses something, it becomes a referendum on whether AI can be trusted.”

“That’s fair,” Sarah said. “We need to earn that trust. We haven’t yet.”

“No, you haven’t. But the fact that you’re not trying to spin this tells me something. Fix the report. Fix your process. And then let’s talk about whether there’s a path forward.”

Sarah hung up and let out a breath she had been holding for what felt like minutes. She had not lost the client. Not yet.

Classify Before You Quote

The Meridian experience forced Sarah to reckon with something she had understood intellectually but had not internalized operationally: the 4S framework was not only a triage exercise. It was also a risk management tool. Getting the classification wrong did not just affect efficiency. It affected quality, client trust, and the firm’s reputation.

She spent the following weekend with David, re-examining every engagement they had completed and every service they offered. The question was simple: Where have we classified work as Standardize that should be Supplement? Where are we relying on AI-first workflows for work that requires human expertise throughout?

Standardize: Where AI Leads

Their low- to medium-complexity contract review practice was genuinely Standardize work: high volume, defined scope, and consistent inputs. The AI pipeline handled document ingestion, clause extraction, risk flagging, and summary generation. Lawyers verified the output.

Quality scores ran above 97 percent. Clients were satisfied. The economics were strong: 65 percent gross margins on fixed-fee engagements.

This was where the AI-native model sang. Predictable work, predictable quality, predictable economics. Sarah could quote fixed fees with confidence because she knew what the pipeline could do and where the human verification layer needed to catch errors.

Supplement: Where Humans and AI Partner

The Meridian engagement should have been classified here from the start. Supplement work benefited enormously from AI: the document analysis, the initial gap identification, the structured deliverable generation. But the analytical framework required human expertise that the AI could not provide. Mapping the establishment-product entity graph, validating that the right rules applied to the right sites under the right registrations, making the judgment calls about severity and remediation priority: these demanded domain knowledge that went beyond what any current AI system could reliably deliver.

After the Meridian correction, Sarah restructured her Supplement workflow. AI still handled the first pass: document analysis, initial findings, draft scope mappings. But a domain expert now reviewed the analytical framework before the AI-generated findings went through detailed verification. The expert did not redo the AI’s work. The expert validated that the AI was asking the right questions, looking at the right scope, and applying each requirement to the right site and product. It was the difference between checking answers and checking the exam.

Specialize: Where Humans Lead

Some work remained firmly in human hands. Strategic regulatory advice (helping Meridian’s leadership decide how to respond to an FDA observation, structuring a remediation plan that balanced compliance with business constraints, advising on whether to challenge or accept an enforcement action) was Specialize work. AI could support it with research, analysis, and drafting, but the judgment was irreducibly human. Sarah priced this work at a premium that reflected the expertise involved and staffed it with her most senior lawyers.

The Classification Discipline

The lesson that crystallized from the Meridian failure was that AI-native did not mean AI-only. It meant AI-first where appropriate, AI-supported where necessary, and human-led where required. The discipline was in the classification, getting the category right for each piece of work, and in having the honesty to admit when you had gotten it wrong.

David built a classification checklist that every engagement had to pass before pricing and staffing. The checklist asked five questions about the work: Was the regulatory structure linear or networked? Were the quality criteria objective or judgment-dependent? Could errors be caught by verification of individual findings, or did they require systemic review? Was domain expertise required at the framework level or only at the verification level? Had the firm done substantially similar work before?

If the answers pointed to Supplement or Specialize, the engagement got different workflows, different staffing, and different pricing. No exceptions.
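A checklist like this is easy to express as a small decision function. The sketch below is one possible encoding, not David's actual tool: the field names, the classify function, and the rule that a single complexity signal is enough to leave Standardize are assumptions made for illustration. The chapter does not say how the five answers were weighed.

```python
from dataclasses import dataclass


@dataclass
class IntakeAnswers:
    networked_structure: bool         # regulatory structure is networked, not linear
    judgment_dependent_quality: bool  # quality criteria depend on judgment
    needs_systemic_review: bool       # errors cannot be caught finding by finding
    framework_level_expertise: bool   # domain expertise needed at the framework level
    done_similar_work: bool           # firm has done substantially similar work


def classify(answers):
    """Return a provisional category; any complexity signal leaves Standardize."""
    signals = [
        answers.networked_structure,
        answers.judgment_dependent_quality,
        answers.needs_systemic_review,
        answers.framework_level_expertise,
        not answers.done_similar_work,  # unfamiliar work counts as a signal
    ]
    if any(signals):
        # Assumed: choosing between Supplement and Specialize is left to a person.
        return "Supplement or Specialize"
    return "Standardize"


# Hypothetical answers for the two engagements described in the chapter.
contract_review = IntakeAnswers(False, False, False, False, True)
meridian = IntakeAnswers(True, True, True, True, False)

contract_result = classify(contract_review)
meridian_result = classify(meridian)
```

Under these assumed answers, routine contract review stays in Standardize and the Meridian review does not. A deliberately strict rule fits the "no exceptions" policy: the function errs toward more human involvement and leaves the final choice between Supplement and Specialize to the person doing intake.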

Nolan Webb made a smaller but telling contribution. Drawing on the lessons of the Meridian misclassification, he built a reference database of every past engagement’s classification: the initial assessment, the actual complexity encountered, and any reclassification that had occurred mid-engagement. The database became a training tool for new intake: before quoting any engagement, the team could search for comparable past work and see how the classification had played out. It was exactly the kind of institutional memory that David had always said distinguished a firm from a collection of practitioners.

Three weeks later, Sarah delivered the corrected Meridian report. The re-review had been a team effort, the kind of coordinated response that would have been impossible a year earlier. Ryan Gallagher led the process, spending three days re-checking every finding against the entity graph that Priya’s module had built. Megan Rivera, whose five years in pharmaceutical regulatory work made her the firm’s closest thing to an internal domain specialist, mapped the establishment-product graph by hand alongside Catherine Alvarez, tracing each of Meridian’s fourteen products to its current site of manufacture, the registration covering that site, the approved scope of that registration, and the CBE-30 history that explained how the portfolio had migrated over the past two years. The map they produced was the validation framework that even Priya’s module had not fully captured on the first pass. Anika Sharma ran systematic verification alongside them, checking each finding against the classification protocols with the methodical precision that had made her the fastest reviewer on the team. By the end of the rework she had effectively been running the verification track herself. David had already started talking to her about a pod lead role.

The corrected analysis identified 62 regulatory gaps, fifteen more than the original, because nineteen of the original findings were noise that the entity-resolution layer correctly stripped, and thirty-four genuine in-scope gaps had been missed by the unscoped pipeline. Catherine Alvarez had reviewed every finding and validated the entity graph. The report included a methodology section explaining the analytical approach, the AI-human workflow, and the quality assurance steps.
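The counts reconcile, and the arithmetic is worth making explicit because the net change hides two larger movements. The figures below come from the two reports; the variable names are only illustrative.

```python
original_findings = 47  # gaps in the first report
stripped_as_noise = 19  # out-of-scope findings removed by the scope check
newly_identified = 34   # in-scope gaps the unscoped pipeline had missed

carried_over = original_findings - stripped_as_noise
corrected_findings = carried_over + newly_identified
net_change = corrected_findings - original_findings
```

Only 28 of the original 47 findings carried over into the corrected total of 62. The net increase of 15 looks modest, but more than half of the corrected findings were new.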

Sarah noted Joshua’s absence only in retrospect. Three months ago, he would have led this re-review, and he would have done it brilliantly, working alone through the nights until every site, registration, and product had been mapped by hand. But the team that did the work was a system, not a collection of individuals. Ryan’s leadership, Megan’s and Catherine’s domain expertise, Anika’s systematic verification: each contributed something that no single person, however talented, could have provided alone.

Tom Kaufman reviewed it over a weekend. His email Monday morning was brief:

“On first pass, the new report is thorough and accurate. This is what I expected the first time, but I guess we can call it progress.”

Sarah read the email three times. Relief, then something sharper: the recognition that “this is what I expected the first time” was both praise and indictment. She had met the standard. She had not exceeded it. She had done what she should have done from the beginning, and doing it had cost her firm roughly $80,000 in rework, specialist fees, and pipeline development.

The call with Tom the following week was the beginning of the real relationship. Not the relationship that started with a conference presentation and an aggressive pitch. The relationship that started with a failure, an honest accounting of the failure, and the demonstration that Sarah’s firm could fix what it had broken.

“I want to be clear about something,” Tom said at the end of the call. “I’m not going to be your easy client. I’m going to push you. Every deliverable, every analysis, every site-product mapping. I’m going to check. Not because I don’t trust AI. Because I don’t trust anyone on the first try.”

“That’s fair,” Sarah said. “We’ll earn it.”

“You’re starting to. We actually have more of this work on the horizon, but I need to give all of this some thought.”

David called the retrospective for Thursday afternoon. The entire team gathered in the conference room: Ryan, Megan, Anika, Nolan, Priya on video, and two engineers who had built the entity-resolution layer. David stood at the whiteboard where he had written Scope. Pipeline. QA. three weeks earlier. The words were still there. He had refused to erase them.

“We are going to talk about what happened, why it happened, and what we change,” David said. “This is not a blame session. This is a learning session. The three failures are on the board. Let us start with scope.”

The discussion was thorough and occasionally uncomfortable. Ryan was direct: “We scoped this as Standardize because the economics were better. If we’d scoped it as Supplement from the start, we’d have priced it at $200,000 to $225,000 or more, and Tom might not have signed.”

“Would we rather have a correctly priced engagement we did not win, or an incorrectly priced engagement we lost money on?” David asked. The question answered itself.

Anika raised the harder question. “Should we have taken this work at all? We had no FDA regulatory experience. No domain specialist on staff. The knowledge base had never seen pharmaceutical regulatory files. Were we qualified?”

The room went quiet. Sarah let the silence hold before answering.

“We were qualified to do the analysis. We were not qualified to do the analysis at Standardize speed and Standardize pricing. The work itself was within our capability. The corrected report proved that. The failure was in classification, not competence.” She looked at David. “But Anika is asking the right question. And the answer is that any engagement in a domain we haven’t worked in before gets Supplement classification by default. No exceptions. Even if the economics are less attractive.”

David wrote it on the whiteboard beneath the three failures: New domain = Supplement until proven otherwise.

“One more thing,” Priya said from the screen. “The entity-resolution layer we built for the rework. It’s better than what we had before. Not just for pharma. For any client whose compliance posture varies by site, business unit, product line, or jurisdiction. The Meridian failure made our pipeline stronger. That doesn’t excuse the failure. But it means the next client in any complex regulatory domain gets a better product than they would have gotten if Meridian had gone smoothly.”

David nodded. “That is the flywheel. Even failures feed it.”

Candor OS 2.0

The retrospective was supposed to end there: three lessons learned, a new classification rule, and a better entity-resolution layer. But Priya asked everyone to stay.

“I have been thinking about this since June,” she said from the screen. “Not just the past three weeks. The entity-resolution layer fixes the immediate problem. But I want to talk about the deeper problem, because if we do not address it now, Meridian will happen again. Different client, different domain, same failure mode.”

The room went quiet. Sarah had expected Priya to declare victory on the rebuild. This was not victory.

“The pipeline we built, Candor OS, is reactive at its core,” Priya continued. “It processes what we give it. Documents go in, analysis comes out. The retrieval layer finds relevant context. The workflow orchestration routes work through the right steps. The quality system catches errors. All of that works. But the system does not think about what it is doing. It does not plan. It does not ask itself whether it has enough information to answer the question. It does not notice when it is missing something.”

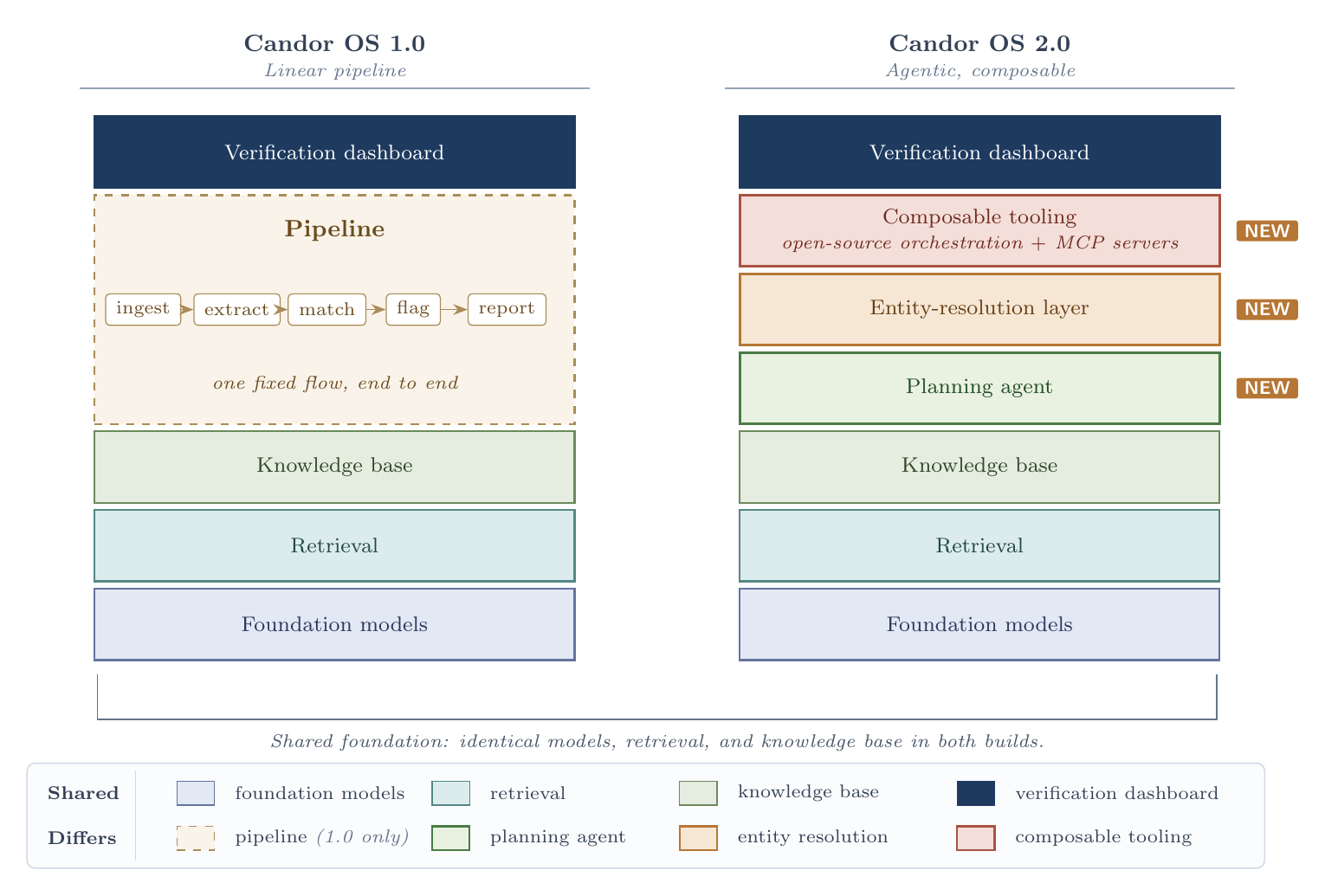

She shared her screen. A diagram appeared: the familiar five-layer architecture from the whiteboard presentation months earlier, but with red annotations marking the failure points from Meridian.

“The Meridian failure happened because the pipeline matched regulations to documentation without first asking which regulations applied to which parts of the company. It treated Meridian as one entity. It is not. A human regulatory expert would never start that way. A human would start by mapping the establishments, the registrations, the products, the transfers, building a model of which rules apply where, and only then begin checking documentation. Our pipeline skipped the mapping and went straight to the checking. It produced confident findings against the wrong scope.”

David leaned forward. “You are saying the pipeline does not plan.”

“Exactly. It executes. It does not plan. And for Standardize work (contract review, compliance checklists, defined-scope analysis), execution without planning is fine, because we have already done the planning when we designed the workflow. The workflow is the plan. But for anything that involves complex entity structures, scope-of-applicability reasoning, or novel analytical frameworks, the system needs to be able to plan its own approach before it starts executing.”

Sarah saw where this was going. “You want to rebuild the platform around agents.”

Priya nodded. “Although we have some agentic elements already in our stack, we need more. The foundational layers (the models, the retrieval system, the knowledge base) stay. They work. But Layer 3, workflow orchestration, needs to become something very different. Instead of predefined pipelines that route documents through fixed steps, we need agents that can receive a complex task, decompose it into subtasks, figure out what information they need, go get it, execute the analysis, check their own work, and revise when they hit something unexpected.”

“You described some of this when you first joined,” Sarah said. “The agent architecture, MCP, the planning layer. You said RAG was a hack to set up future agentic offerings.”

“I did. And at the time, neither the models nor the tooling were ready. The planning capability was too brittle. Agents would get stuck in loops, hallucinate subtasks that made no sense, or abandon their plan at the first unexpected input. The frameworks for orchestrating multi-step agentic work were research code. We were right to start with structured pipelines.” Priya paused. “Two things changed this year. First, the open-source ecosystem reached production quality somewhere around late spring. Agent orchestration frameworks, structured tool-use validators, MCP server implementations for half the data sources we care about, planning libraries that actually handle revision and recovery, all of it composable. Six months ago I would have had to build those primitives from scratch and I would not have built them well. Today I can compose them. Second, the models have kept improving at the same time. Claude in particular has gotten dramatically more reliable at extended reasoning and tool use over this same window. The plan-and-execute patterns that were brittle demos in 2025 are much closer to production-grade today. The two trends compound. Better tooling on top of better models is not additive. It is multiplicative. The Meridian failure is the signal that we have outgrown the pipeline architecture. We are trying to do agent-level work with pipeline-level tools, and the components to do it properly are now sitting on the shelf.”

She let that land before continuing.

“I have not been waiting for permission to explore this. I have been prototyping since last year. The agent that built the entity graph for the Meridian rework, that was not the production pipeline. That was a prototype I had running on my laptop. I patched it into the rework because we needed it. It worked.”

There was a long silence. Sarah looked at David.

“What does this cost?” she asked.

Priya had prepared for the question. “Six weeks. Two engineers and me. The reason it is six weeks and not six months is what I just told you. Most of the components already exist, and I consolidated a working build over the holidays under the pressure of the Meridian rework. The agent that produced the entity graph for the corrected report was running on that build. What I need now is focused engineering time to harden what I have, finish the orchestration layer, and stand up the production deployment. By the first week of March, the first agent-driven engagement goes live.”

“And during those six weeks?” David asked. “We are not going to stop taking Supplement work.”

“We keep the existing pipeline running for all current Standardize work: contract review, compliance monitoring, the volume business. Nothing changes there. For Supplement work, we handle it the way we handled the Meridian rework: with human-driven analysis augmented by the existing pipeline plus the prototype agent layer where it helps. More expensive, slower, lower margin. But we do not ship another Meridian. The agent upgrade is the fix that prevents the next one.”

Sarah felt the familiar tension and then felt it dissolve as she registered what Priya had actually said. Priya was not asking for a leap of faith. She was asking for ratification. The work was already half done; the prototypes were already running; the components were already on the shelf. What had looked like a multi-month bet a year ago was now a six-week consolidation of work that had been quietly compounding since the prior year.

She remembered Alex’s words from the budget call that now felt like a lifetime ago: You are not a law firm that uses technology. You are a technology company that practices law. Fund it accordingly.

“What do we call it?” Sarah asked.

“Candor OS 2.0,” Priya said. “The pipeline version was 1.0, and it was the right architecture for where we were. Structured workflows, defined steps, human review at every checkpoint. That got us from zero to three million in revenue. But 2.0 is where the platform starts to reason. Agents that can plan complex analyses. Tools connected through MCP so the agents can reach into regulatory databases, client knowledge bases, document repositories, whatever they need, through a standardized interface. Extended reasoning for hard problems, where the system thinks longer and deeper before producing output instead of rushing to a first-pass answer. And a planning layer that decomposes complex engagements into subtasks before executing any of them.”

She pulled up a side-by-side comparison on her screen.

“In 1.0, if you give the system a pharmaceutical regulatory compliance review, it runs each document through a fixed pipeline: ingest, extract, classify, match against rule, flag, report. The pipeline does not know what it does not know. It cannot ask which site a document belongs to. It cannot ask whether a requirement applies. It cannot revise its analytical approach when it encounters something unexpected. It just executes the steps.”

“In 2.0, an agent receives the same task and starts by building an analysis plan. It identifies the entity structure first: which sites, which establishment registrations, which products, which approved scopes, which recent transfers. It maps the document set against that entity graph. Only then does it begin matching requirements against documentation, and the match is always scoped: this rule, at this site, for this product, under this registration. It executes the plan step by step, but it can revise the plan mid-execution when it discovers a transfer it did not know about or a registration scope that contradicts the document trail. And it checks its own work, running a verification pass that confirms each finding sits in the right scope before it goes to a human reviewer.”

“That is how Catherine worked on the Meridian rework,” David said quietly. “She built the establishment map first. Then she walked the products through it. Only then did she analyze the documents. The system should work the same way.”

“Exactly,” Priya said. “We are encoding Catherine’s analytical methodology into the platform. Not replacing Catherine. She still reviews the output, still applies judgment, still catches the things the agent misses. But the agent does the systematic work that Catherine should not have to do manually. The entity mapping, the scope reasoning, the gap identification, the verification: those are cognitive tasks that scale with AI and do not scale with humans.”
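
The scoped matching Priya describes can be illustrated with a minimal Python sketch. Everything in it is an assumption made for illustration: the class names, the rule identifier, and the site and registration labels are hypothetical, and this is not Candor OS code. It contrasts an unscoped check, which treats the company as one entity, with a scoped check that only counts evidence from the same site, product, and registration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scope:
    """One node of a (hypothetical) entity graph: a product made at a site under a registration."""
    site: str
    product: str
    registration: str


@dataclass(frozen=True)
class Requirement:
    rule_id: str
    applies_to: Scope


@dataclass(frozen=True)
class Document:
    doc_id: str
    scope: Scope            # assumed to be resolved against the entity graph before matching
    satisfies: frozenset    # rule_ids this document provides evidence for


def unscoped_gaps(requirements, documents):
    """1.0-style check: a rule counts as covered if any document anywhere evidences it."""
    evidenced = set().union(*(d.satisfies for d in documents))
    return [r for r in requirements if r.rule_id not in evidenced]


def scoped_gaps(requirements, documents):
    """2.0-style check: evidence only counts when it comes from the same scope."""
    return [
        r for r in requirements
        if not any(d.scope == r.applies_to and r.rule_id in d.satisfies for d in documents)
    ]


# Hypothetical data: one rule that applies separately at two sites.
site_a = Scope("Site A", "Product X", "REG-001")
site_b = Scope("Site B", "Product X", "REG-002")
requirements = [Requirement("RULE-1", site_a), Requirement("RULE-1", site_b)]
documents = [Document("DOC-1", site_a, frozenset({"RULE-1"}))]

print([r.applies_to.site for r in unscoped_gaps(requirements, documents)])
print([r.applies_to.site for r in scoped_gaps(requirements, documents)])
```

In this toy case the unscoped check reports no gaps, because the Site A document appears to satisfy the rule everywhere, while the scoped check flags Site B as missing evidence.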

Sarah made the decision faster than she expected. The Meridian failure had already cost them $80,000, and it might yet cost them the client. The next Meridian, in a different domain with a different client, could cost them more. The agent architecture was not a nice-to-have. It was the infrastructure that would prevent the failure mode they had already experienced from repeating.

“Do it,” she said. “Candor OS 2.0. Six weeks. David, adjust the budget. Priya, I want a milestone plan by end of week: what ships when, what we can demo to the board, and when our first truly agent-driven engagement goes live.”

Priya’s expression shifted from advocacy to something closer to relief. She had been carrying the prototypes for six months, waiting for the right moment to bring them forward. The Meridian retrospective had been that moment.

“One more thing,” Sarah added. “When Tom Kaufman comes back, and I believe he will, I want Candor OS 2.0 to be what he comes back to. Not the patched version. The version that would have gotten it right the first time.”

The call came two weeks later. Sarah saw Tom’s name on her phone and felt the familiar tension, the knot that had lived in her chest since the eleven errors.

“Sarah, the corrected report was excellent. Diane and I have been through every finding. The scope mapping is solid. The methodology section alone was worth the engagement.”

“I’m glad. We learned a lot producing it.”

Tom was quiet for a beat too long. Sarah knew what was coming before he said it.

“Marcus Chen called me last week.”

Sarah closed her eyes. Marcus Chen, the regulatory lawyer whose firm had quoted $340,000 for the same work. The lawyer Tom had worked with for years before Sarah’s conference demo had caught his attention.

“He’d heard we were working with an AI firm on the regulatory review. He didn’t say ‘I told you so’ (Marcus is too professional for that), but he made a pitch. His team, his process, his twelve years of FDA experience. No AI in the loop. No pipeline to rebuild mid-engagement.” Tom paused. “Just lawyers who’ve done this work their entire careers.”

“Tom—”

“Let me finish. I appreciate what you did. The transparency, the rework at no charge, the honesty about what went wrong. That matters to me. It tells me something about your firm that I respect.” Another pause. “But I can’t take the risk. We have an FDA inspection coming. If we go in with gaps because an AI system applied the wrong scope to one of our sites, and the agency learns we used an AI-native firm for the compliance review, the optics alone could trigger enhanced scrutiny. Listen, I like what you are doing, and I am somewhat conflicted, but I need to go back to Marcus. I won’t lose my job for paying more for this type of work, but I could lose my job if we blow it on an FDA inspection.”

Sarah did not argue. She did not pitch. She did not offer a discount or a guarantee or a follow-up pilot.

“I understand,” she said. “If the situation changes, we’ll be here.”

“I know you will.”

After she hung up, Sarah sat with the weight of it. She had done everything right: been transparent, fixed the work, absorbed the cost, earned the client’s respect. And she had still lost the engagement. Not because the corrected work was inadequate. Not because she had been dishonest. Because trust, once cracked, does not heal on the timeline the person who cracked it would prefer.

David found her staring at the whiteboard twenty minutes later. She told him.

“We lost Meridian,” he said. Not a question.

“We lost Meridian.”

David was quiet for a moment. Then: “The pipeline is better. The QA process is better. The classification discipline is better. We paid $80,000 and lost a client to learn lessons that will prevent us from losing the next ten clients. That is not a good trade. But it is not the worst trade either.”

“It doesn’t feel like a trade. It feels like a loss.”

“It is a loss. Losses are how firms like this get stronger, if they are honest about what went wrong. The ones that do not survive are the ones that pretend losses are just trades.”

Proof Over Promises

The Meridian experience transformed how Sarah thought about client acquisition. Before Meridian, her approach had been opportunistic: attend conferences, give demos, follow up with proposals. She closed deals on the strength of her pitch and the power of the demo. The pipeline was the star of every meeting.

After Meridian, she understood that the pipeline was necessary but not sufficient. Clients were not buying technology. They were buying outcomes, and outcomes required the right classification, the right workflow, the right people, and the right quality assurance. The technology was the enabler, not the product.

This shift in thinking changed her acquisition strategy.

Proof-Based Selling

Sarah had grown up professionally in a world where business development meant lunches, golf outings, bar association events, and the slow cultivation of relationships over months and years. A partner at her old firm had once told her that it took three years to convert a prospect into a client. “First year, they learn your name. Second year, they learn your work. Third year, they give you a matter.”

That model was not available to Sarah. She did not have three years. She did not have the brand of an established firm. She did not have a Rolodex of GCs who owed her favors. What she had was a platform that could demonstrate its capabilities in real time, quality metrics she could publish, and the willingness to put her money where her pitch deck was.

She developed what David called their “proof-based acquisition framework”: a system for converting skeptical prospects into clients through demonstrated capability rather than relationship cultivation.

The first element was the free pilot. Sarah offered prospective clients a limited-scope engagement at no charge. Not a demo. An actual engagement. She would take a subset of the prospect’s real work, run it through the pipeline, deliver a real work product, and let the prospect evaluate the quality against what their current providers delivered.

The pilots were expensive. Each one cost the firm between $5,000 and $15,000 in labor and compute, with no guarantee of conversion. David objected at first. “We cannot keep giving away work. Our runway is not infinite.”

“We’re not giving away work,” Sarah replied. “We’re investing in acquisition. Calculate the cost per pilot, the conversion rate, and the lifetime value of a converted client. Then tell me the ROI.”

David ran the numbers. The first quarter of pilots showed a 40 percent conversion rate. Average engagement value for converted clients was $85,000. Average lifetime value, projected over three years with expansion, was $280,000. Cost per pilot: $10,000. Cost of acquisition per converted client: $25,000.

“That is a healthy LTV-to-CAC ratio,” David said. “Better than most SaaS companies.”

“And it gets better,” Sarah said. “The pilots that don’t convert still generate robustness checks for our pipeline and content for case studies. Nothing is wasted.”
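
David's arithmetic can be reproduced in a few lines. The sketch below is illustrative only: the function names are hypothetical and not part of the dashboard described in the story, and it assumes that the acquisition cost per converted client is simply the pilot cost divided by the conversion rate, which matches the figures quoted above.

```python
def cost_per_converted_client(cost_per_pilot: float, conversion_rate: float) -> float:
    """Average pilot spend needed to land one converted client."""
    if not 0 < conversion_rate <= 1:
        raise ValueError("conversion_rate must be in (0, 1]")
    return cost_per_pilot / conversion_rate


def ltv_to_cac(lifetime_value: float, cac: float) -> float:
    """Ratio of projected lifetime value to cost of acquisition."""
    if cac <= 0:
        raise ValueError("cac must be positive")
    return lifetime_value / cac


# Figures from the first quarter of pilots, as quoted in the text.
cac = cost_per_converted_client(cost_per_pilot=10_000, conversion_rate=0.40)
ratio = ltv_to_cac(lifetime_value=280_000, cac=cac)

print(f"CAC per converted client: ${cac:,.0f}")
print(f"LTV-to-CAC ratio: {ratio:.1f}")
```

With these inputs the acquisition cost comes to $25,000 per converted client and the ratio to a little over eleven to one.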

The second element was the money-back guarantee. For clients who were not ready for a free pilot but wanted risk protection, Sarah offered a straightforward guarantee: if the deliverable did not meet specified quality standards, the client paid nothing. Full refund, no questions asked.

The concept was straightforward: AI-native firms had to compete on proof, not relationships, because they lacked the relationship capital that traditional firms had accumulated over decades.

In eighteen months, Sarah had never actually had to return money. Both times it came close, the issue was a scope misunderstanding rather than a pure quality failure. Both times she offered a refund, and both times the client ultimately re-engaged with clearer scope. The guarantee cost her essentially nothing in practice but removed enormous friction from the sales process.

The third element was published quality metrics. Sarah published her firm’s quality scores (accuracy rates, turnaround times, client satisfaction scores) on her website. Not vague testimonials. Actual numbers. Updated quarterly.

“You are making yourself vulnerable,” David said. “If the numbers dip, everyone will see it.”

“Good. It keeps us honest. And it signals to prospects that we’re confident enough in our quality to make it public.” Sarah paused. “When was the last time a traditional law firm published its error rate?”

David conceded the point.

The Acquisition Funnel

The proof-based elements fed into a structured acquisition funnel that Sarah and David built together. The funnel had five stages, and they tracked conversion rates at each one.

Awareness came through conference presentations, published content, and word of mouth. Sarah wrote articles about AI-native legal service delivery, not marketing fluff but substantive pieces about methodology, quality assurance, and the realities of human-AI collaboration. She was honest about limitations, which paradoxically built more credibility than claiming perfection.

Interest materialized when prospects reached out, usually after reading an article or hearing a conference talk. Sarah’s team responded within 24 hours with a brief, customized overview of how their services applied to the prospect’s specific situation. No generic brochures. No “let me put together a proposal and get back to you next week.”

Evaluation happened through the free pilot or a detailed capability presentation. Sarah had learned from the Meridian experience to be rigorous during evaluation about classifying the prospect’s work correctly. If the work was Supplement or Specialize, she said so, and explained what that meant for workflow, staffing, and pricing.

Decision was the moment the prospect chose to engage. Sarah made this easy: clear pricing, defined scope, quality guarantees, fast contracting. Her engagement letters were four pages, not forty. The terms were fair to both sides.

Expansion, the work of turning a single engagement into a recurring relationship, was where the real value lived. Sarah tracked expansion carefully, and she found that clients who came through the proof-based funnel expanded at nearly twice the rate of clients who came through traditional business development.

The reason was straightforward: by the time a proof-based client signed an engagement letter, they had already seen the work product. Their expectations were calibrated to reality. There were no surprises, which meant there were no disappointments, which meant they were willing to expand scope.

Acquisition Metrics

David built an acquisition dashboard that tracked the economics of client development with the same rigor that a SaaS company would track its sales funnel. Cost of acquisition, or CAC, measured everything the firm spent to land a new client: marketing, business development time, pilot costs, and the opportunity cost of Sarah’s own time on pitches. The number settled at roughly $25,000 per new client, which was high for a small firm but sustainable given the lifetime value on the other side.

Conversion rates measured the drop-off at each funnel stage. The biggest loss was between awareness and interest. Many people heard Sarah speak at conferences but never reached out. The smallest loss came between evaluation and decision. Once prospects saw the work product, most engaged. This pattern told Sarah where to invest: more pilots, fewer brochures.

Time to close measured how long the acquisition process took. Traditional firms measured this in months or years. Sarah’s proof-based model closed new clients in four to eight weeks on average. The pilots accelerated the timeline because they replaced months of relationship cultivation with days of demonstrated capability.

These metrics connected directly to the economics Maya had taught Sarah in that restaurant in the West Village: lifetime value, customer acquisition cost, the ratio between them. The AI-Era Client Model from the analytical framework was not abstract. It was the operating system for how Sarah’s firm grew.

On a Tuesday morning in mid-May, roughly four months after Sarah had lost Meridian, her phone rang. Tom Kaufman.

She almost did not pick up. The last time they had spoken was the call where he told her he was going back to Marcus Chen. She had replayed that conversation more times than she wanted to admit.

“Sarah. I need to talk.”

“I’m here.”

“I’ve been meaning to call for a few weeks now. People in my network kept mentioning your firm: the platform you spent the winter rebuilding, the agent-driven engagements they had been hearing about, the entity-resolution work that was becoming a quiet reference point in pharma regulatory circles. By March I was hearing it from three or four people. By April it was hard to ignore.”

Sarah said nothing.

“Then Marcus Chen’s group completed our pre-inspection review last month. Fourteen weeks. $380,000. The work was professional. Marcus is a good lawyer; I’ve said that from the beginning.” Tom paused. “But Diane found two gaps that your corrected report had caught and Marcus’s team missed. Both involved scope-of-applicability questions: requirements that applied to a specific site under a specific registration, not the version of the rule Marcus’s associates were checking against. It is the same class of problem your original pipeline got wrong. Your rebuilt pipeline now gets it right, and Marcus’s team apparently does not always get it right either.”

Sarah said nothing. She was doing math in her head, and the math was telling her something she had not expected.

“I also looked at doing it ourselves,” Tom continued. “Diane and I spent a weekend with one of the AI platforms, ran a subset of our regulatory files through it, tried to replicate what your pipeline does. The technology is impressive. We could probably get eighty percent of the way there.” He paused. “But eighty percent on FDA compliance is not a number I can live with. And if something goes wrong, if we miss a gap and the agency finds it, there’s no one to call. No malpractice coverage. No firm standing behind the work. Just us, explaining to our CEO that we used a software tool instead of a lawyer and it missed something material.”

Sarah recognized the calculus. It was the same one Allison McLindon had worked through eighteen months earlier when she chose Candor’s per-unit BAA review over consolidating with one of her four traditional outside counsel. Allison had run the math the same way Tom was running it now: the tools were accessible, but the liability and the verification layer were not. Every sophisticated in-house team eventually arrived at the same conclusion.

“Here’s what I realized,” Tom said. “Your firm made a mistake and built a system to catch that type of mistake. Marcus’s firm made the same type of mistake and didn’t know they’d made it. And doing it in-house means owning every mistake ourselves with no insurance and no expert review. I would rather work with a bar-admitted firm that uses the best tools and has the system to find its own errors than a traditional firm that doesn’t know the errors exist, or a software platform that doesn’t carry the liability.”

“Tom—”

“I’m coming back. But I want to do this differently. Not a one-off engagement. A subscription. Monthly retainer for ongoing regulatory monitoring and compliance support. I want your pipeline, the improved version with the entity-resolution layer, running against our regulatory files continuously. And I want a senior lawyer reviewing the output every month.”

Sarah’s throat tightened. She had lost this client. She had mourned this client. And now he was coming back, not because she had pitched him, not because she had offered a discount, but because the system she had built to fix a failure had proven better than the system that had never failed visibly.

“We can do that,” she said. “$28,000 per month. Fixed fee. Senior lawyer review included.”

“Done. And Sarah, I’ve been talking to the deputy GC at Cascade Biologics. They need a regulatory compliance review before their fall filing deadline. I told her about your firm. The whole story: the eleven errors, the transparency, the rework, the fact that I left and came back.” He paused. “She said it was the most honest vendor reference she’d ever heard. She wants a proposal by Friday. And Sarah, she mentioned eight hundred thousand in annual outside regulatory spend she wants to consolidate with one firm. Your firm, if the first engagement goes well.”

After hanging up, Sarah sat with the weight of what had just happened. Tom Kaufman had become not just a retained client but an active advocate, the kind of client that every professional services firm dreams about. And it had started with a failure, survived a loss, and returned as something stronger than it would have been if things had gone smoothly the first time. Linda Torres had taught her two years earlier that transparency about a flawed deliverable could win trust. Tom had taught her the harder lesson: transparency at speed, under reputational pressure, with the cost absorbed visibly, was what won the client back when transparency alone could not.

The advocacy phase of the client lifecycle, she realized, was not something you could engineer. You could not manufacture reference clients through excellent marketing or clever incentive programs. You earned them by doing good work, being honest when you fell short, and demonstrating that your firm could learn from its mistakes, even when the learning cost you the client. Tom had not become an advocate because the technology impressed him. He had become an advocate because the full arc (failure, honesty, loss, improvement, return) had shown him something that a flawless engagement never could have.

Revisiting Our SKUs

By the time Meridian arrived, fixed-fee and per-unit pricing were already the operating defaults for Sarah’s Standardize practice, the conventions of the model she had built since the firm’s first engagements. What the post-mortem forced into focus was what to do with everything that did not fit Standardize: ongoing client relationships, complex Supplement work, and irreducibly human Specialize advice.

Subscription: The Relationship Model

For clients with ongoing needs, Sarah introduced subscription pricing: a monthly or annual fee for access to a defined scope of services. The subscription included a baseline level of work product plus access to the firm’s platform for self-service analytics and reporting.

The subscription model changed the client relationship from transactional to ongoing. Instead of engaging Sarah’s firm matter by matter, subscription clients had a continuous relationship. They sent work as it arose, without the friction of new engagement letters and pricing negotiations.

The economics of subscriptions worked for both sides. Clients got predictable costs and immediate access to services. Sarah got predictable revenue and higher retention. A subscription client was far less likely to shop alternatives for each new matter than an engagement-by-engagement client.

David modeled the economics carefully. Subscription pricing required estimating average utilization: how much work a typical subscription client would send per month. Price too low, and the firm lost money when clients sent heavy volumes. Price too high, and clients felt they were not getting value. Sarah set subscription prices based on historical utilization data, with quarterly true-ups that adjusted the fee if actual usage deviated materially from estimates.
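
One way to picture the quarterly true-up is as a tolerance band around the utilization estimate. The Python sketch below is an assumption-heavy illustration, not the firm's actual formula: the 15 percent threshold for a "material" deviation, the proportional adjustment, and the function name are all hypothetical.

```python
def quarterly_true_up(monthly_fee: int, estimated_units: int, actual_units: int,
                      tolerance: float = 0.15) -> int:
    """Return next quarter's monthly fee in whole dollars.

    The fee is unchanged while usage stays within `tolerance` of the estimate
    (an assumed definition of "material"); otherwise it is scaled in
    proportion to actual usage.
    """
    if estimated_units <= 0:
        raise ValueError("estimated_units must be positive")
    deviation = (actual_units - estimated_units) / estimated_units
    if abs(deviation) <= tolerance:
        return monthly_fee
    return round(monthly_fee * actual_units / estimated_units)


# Hypothetical client: $10,000 per month, priced for 100 units of work per quarter.
print(quarterly_true_up(10_000, estimated_units=100, actual_units=110))  # inside the band
print(quarterly_true_up(10_000, estimated_units=100, actual_units=140))  # heavy usage
print(quarterly_true_up(10_000, estimated_units=100, actual_units=70))   # light usage
```

The band keeps fees predictable for small swings in volume, while heavy or light usage moves the fee in the direction that protects whichever side was mispriced.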

Within a year, subscription revenue represented 35 percent of the firm’s total revenue. The stickier metric: subscription clients had a 94 percent annual retention rate, compared to 78 percent for engagement-by-engagement clients.

Supplement and Specialize Pricing

The Meridian experience taught Sarah that Supplement and Specialize work required different pricing logic. These engagements had higher costs (domain specialists, more extensive human review, additional quality assurance steps) and carried more risk. Pricing them like Standardize work was a recipe for the kind of loss she had taken on Meridian.

For Supplement work, Sarah used enhanced fixed fees, higher than Standardize pricing, reflecting the additional human expertise involved. She was transparent about the premium: “This engagement involves regulatory analysis that requires domain specialist review. The fixed fee reflects that requirement. If you want a lower price, we can discuss reducing scope to the elements that fit our Standardize workflow.”

Most clients chose the Supplement price. They understood that complex work cost more, and they appreciated that Sarah was honest about why rather than burying the cost in opaque hourly bills.

For Specialize work, Sarah experimented with value-based pricing: fees tied to the value of the outcome rather than the cost of the work. When she advised a client on a regulatory strategy that avoided a potential FDA enforcement action, the value to the client was measured in millions of dollars in avoided costs. Pricing that work at a flat fee based on hours would have left enormous value on the table. Value-based pricing captured a share of the value created, aligning the firm’s interests with the client’s outcomes.

Value-based pricing was harder to implement than fixed or per-unit models. It required understanding the client’s situation well enough to estimate the value at stake, which demanded the kind of deep client knowledge that came from ongoing relationships rather than one-off engagements. Sarah reserved value-based pricing for her most established client relationships, clients like Tom Kaufman who trusted her enough to have the conversation about value.

The Pricing Matrix

David synthesized the pricing evolution into a matrix that mapped pricing models to service classifications.

| Classification | Primary Pricing | Margin Target | Risk Profile |
|---|---|---|---|
| Standardize | Per-unit or fixed fee | 60–65% | Low |
| Supplement | Enhanced fixed fee | 40–50% | Moderate |
| Specialize | Value-based or premium fixed | 35–45% | Higher |
| Subscription* | Monthly retainer | 50–60% | Low (recurring) |

The matrix was not rigid. Some engagements warranted hybrid approaches or exceptions. But it provided a starting point that prevented the kind of misclassification error that had cost Sarah the Meridian margin. Every new engagement went through the classification checklist before pricing, and the pricing model followed from the classification.
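One way to picture "classify before you price" is as a lookup that refuses to produce a number for an unclassified engagement. The sketch below encodes the matrix as data. The `quote_floor` helper, and its reading of the margin target as gross margin on price, are assumptions for illustration rather than the firm’s actual tooling.

```python
# The pricing matrix as data; margin targets are (low, high) fractions of price.
PRICING_MATRIX = {
    "Standardize":  {"pricing": "Per-unit or fixed fee",        "margin": (0.60, 0.65), "risk": "Low"},
    "Supplement":   {"pricing": "Enhanced fixed fee",           "margin": (0.40, 0.50), "risk": "Moderate"},
    "Specialize":   {"pricing": "Value-based or premium fixed", "margin": (0.35, 0.45), "risk": "Higher"},
    "Subscription": {"pricing": "Monthly retainer",             "margin": (0.50, 0.60), "risk": "Low (recurring)"},
}


def quote_floor(classification: str, estimated_cost: float) -> float:
    """Lowest price that still meets the low end of the margin target.

    Assumes margin = (price - cost) / price, so price = cost / (1 - margin).
    Raises ValueError if the engagement has not been classified.
    """
    if classification not in PRICING_MATRIX:
        raise ValueError(f"Classify before you quote: {classification!r} is not in the matrix")
    low_margin, _ = PRICING_MATRIX[classification]["margin"]
    return round(estimated_cost / (1 - low_margin), 2)
```

On these assumptions, a Supplement engagement estimated to cost $60,000 to deliver would need a fee of roughly $100,000 to hit the 40 percent floor. The same cost quoted on Standardize assumptions would look far more profitable on paper than it could ever be in delivery, which is the Meridian error in miniature.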

Platforms Are Not the Only Thing That Compounds

The final phase of the client lifecycle, Expand, was where Sarah’s firm generated its best economics. Tom Kaufman was the model.

His initial engagement was a $175,000 regulatory compliance review that lost $80,000 in rework. Then Sarah lost the client entirely when Tom returned to Marcus Chen’s firm. Roughly four months later, in mid-May 2027, Tom came back as a subscription client paying $28,000 per month for ongoing regulatory support, $336,000 annually. The relationship also generated the Cascade Biologics referral within weeks, which became a $120,000 engagement in its own right.

From a $175,000 engagement that lost $80,000, and then lost the client, to a relationship generating more than $450,000 in annual revenue plus referrals. The path was not linear. But the temporary loss had not been wasted: the pipeline rebuild and specialist network that Candor had developed during the Meridian rework survived Tom’s departure, improving every subsequent engagement in complex regulatory domains.
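The arithmetic behind that figure is simple enough to check. The sketch below assumes that the "more than $450,000" total combines Tom’s annualized subscription with the Cascade Biologics engagement; the variable names are illustrative.

```python
# Figures from the Meridian relationship as described above.
subscription_monthly = 28_000
subscription_annual = subscription_monthly * 12    # annualized subscription
referral_engagement = 120_000                      # Cascade Biologics

# Assumed composition of the "more than $450,000" relationship value.
relationship_annual = subscription_annual + referral_engagement

# The original engagement, for contrast: $175,000 in fees, $80,000 lost to rework.
initial_fee = 175_000
rework_loss = 80_000
```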

Five months after the Meridian crisis, Sarah sat in her office reviewing the Q1 2027 dashboard. The numbers were strong, stronger than they had been before the failure, though the path had been anything but smooth. Revenue had grown steadily from the crisis trough, the run rate climbing month over month. Margins on Standardize work held at 63 percent. Supplement margins were trending toward 44 percent as the redesigned workflow took hold. Client satisfaction scores averaged 4.6 out of 5, with Meridian (the client she had lost and earned back) rating the first month of the new subscription at 4.8. Net revenue retention had crossed 124 percent, which David had explained meant that expansion among existing clients was more than offsetting any revenue lost to departures. And pharma regulatory was now the firm’s largest vertical by revenue: Tom’s subscription anchored it, Cascade Biologics was about to ramp, and David was already mapping the next set of mid-market biotech prospects.

David knocked on her doorframe. “Tom Kaufman is on the phone. Says he has another referral.”

Sarah smiled. “Put him through.”

Before she picked up, she looked at the framed index card on her desk. She had written the first line the week after the Meridian crisis. She had added the second line the day Tom told her he was going back to Marcus Chen. Both lines were in her own handwriting:

Classify before you quote.

Trust takes longer than you think.

The first lesson was about discipline. AI-native did not mean AI-everything. It meant knowing where AI led, where humans and AI partnered, and where humans led, and having the courage to price, staff, and deliver accordingly.

The second lesson was about patience. You could do everything right (be transparent, fix the work, absorb the cost) and still lose the client. Trust was not a transaction. It was an arc, and sometimes the arc bent away from you before it bent back.

Tom’s voice came through the speaker. “Sarah, I’ve got two more companies for you. But they want to hear the full story. Not just the eleven errors and the fix. They want to hear that you lost me and earned me back. That’s a better reference than never having failed at all.”

“I’m happy to tell the whole story,” Sarah said. “The ending doesn’t work without the middle.”

The model had survived its first crisis. The firm was building a bench. The platform was compounding. And Tom Kaufman was on the phone with another referral. Trust, it turned out, was compounding too.